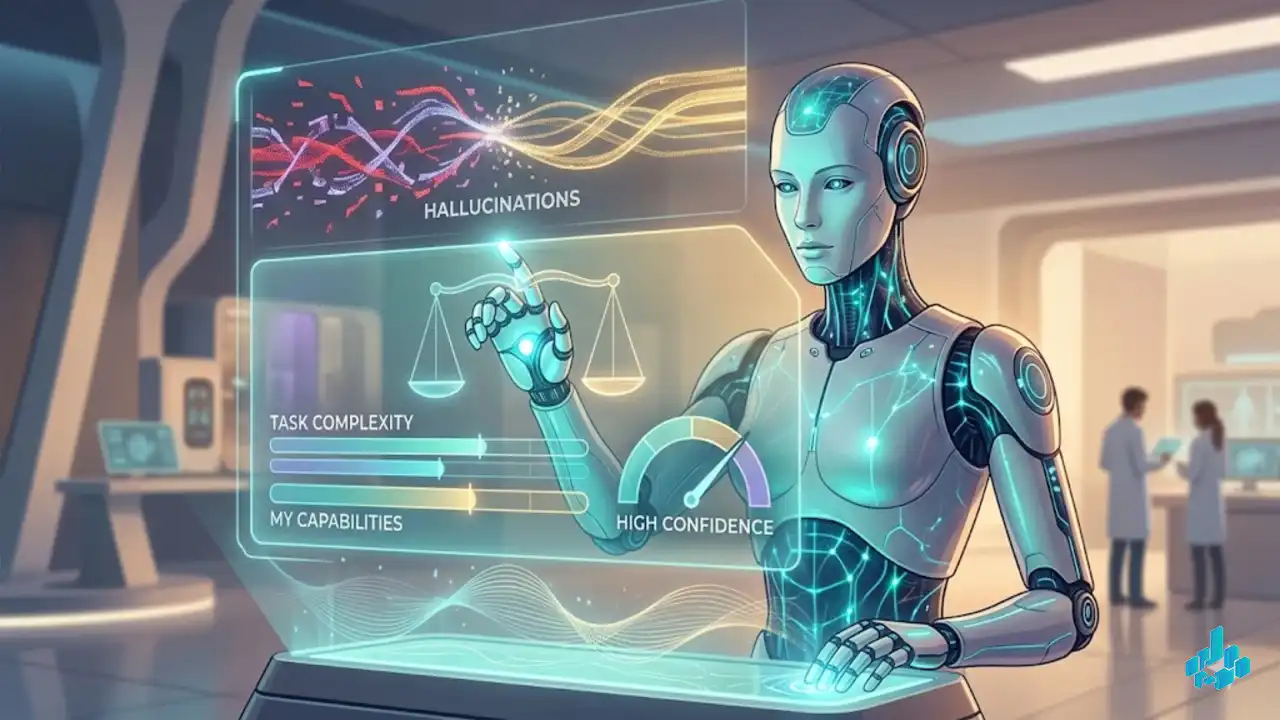

Until now, agents have often attempted a task even when they lacked the necessary tools (APIs) or context, and produced garbage responses as a result. Appier proposes an evaluation metric: before acting, the agent estimates its confidence that it will succeed. If the score is low, the model aborts execution and either calls an external function (a tool call) or asks the developer for help directly. For agentic AI this is an important fix: it makes agent behavior more predictable and better suited to production use in the B2B sector.
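The gating idea can be sketched in a few lines of Python. This is only an illustration, not Appier's implementation: the `gate` function, the `Decision` type, the action labels, and the 0.7 threshold are all hypothetical, and the confidence score is assumed to come from some separate estimator that returns a value in [0, 1].

```python
from dataclasses import dataclass


@dataclass
class Decision:
    action: str        # "answer", "tool_call", or "ask_human" (hypothetical labels)
    confidence: float  # the score the decision was based on


def gate(confidence: float, tool_available: bool, threshold: float = 0.7) -> Decision:
    """Decide what the agent does before it acts.

    `confidence` is assumed to be a pre-action estimate of success in [0, 1].
    `threshold` is an arbitrary illustrative cutoff, not a value from the source.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be in [0, 1]")
    if confidence >= threshold:
        # Confident enough: proceed with the task directly.
        return Decision("answer", confidence)
    if tool_available:
        # Low confidence, but an external function may supply what is missing.
        return Decision("tool_call", confidence)
    # Low confidence and no tool to fall back on: escalate to a person.
    return Decision("ask_human", confidence)


if __name__ == "__main__":
    for score, has_tool in [(0.9, False), (0.3, True), (0.3, False)]:
        print(score, has_tool, gate(score, has_tool).action)
```

In practice the cutoff would presumably be tuned on held-out tasks, since a gate like this is only as useful as the calibration of the score feeding it.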

Source: Appier / arXiv / GitHub

Research, Appier, LLM Calibration, Agentic AI, Machine Learning