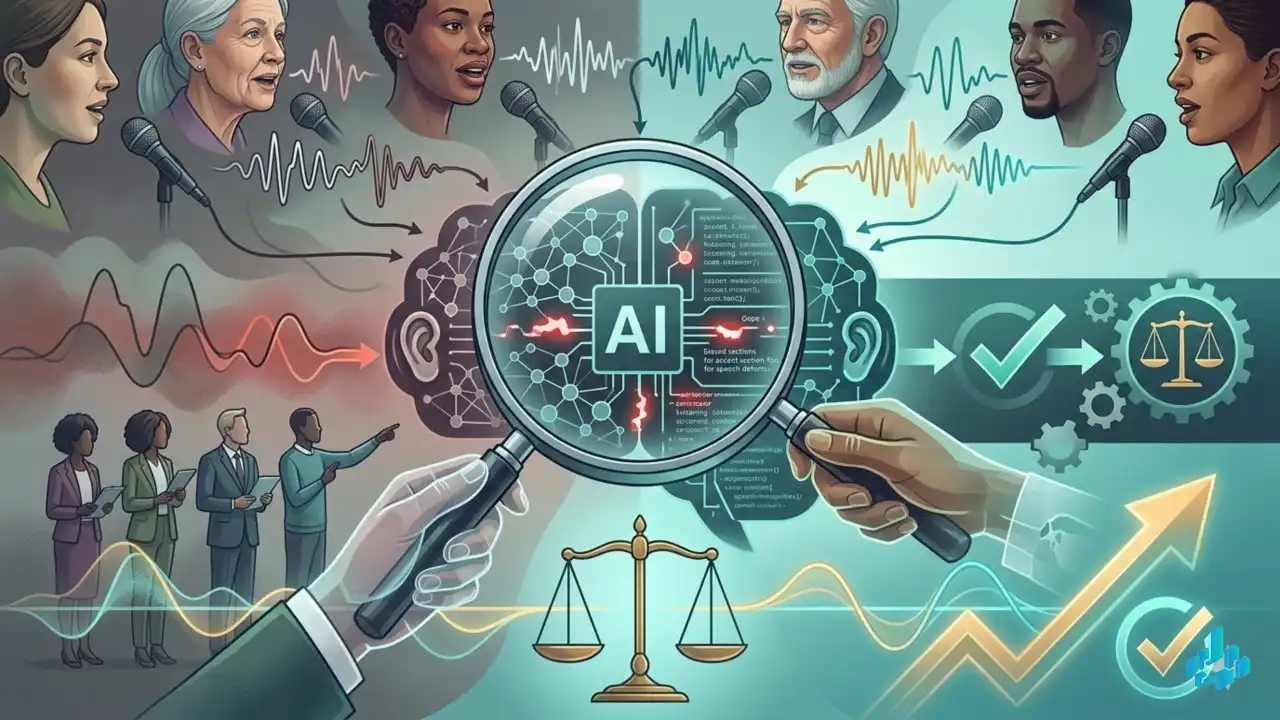

The problem with corporate AI is that developers write their own benchmarks, under sterile conditions. As a result, automatic speech recognition (ASR) systems handle standard English well but stumble on accents, speech impediments, and the dialects of marginalized groups. The publication proposes standardizing external AI audits that involve real users and community groups. This is a clear signal to regulators, including the authors of the EU AI Act: algorithm safety should be judged not by corporate reports but by inclusivity metrics measured "in the field."

Source: Nature Machine Intelligence

Tags: Ethics, ASR, Auditing, Regulation, Inclusivity